In our fast-paced, technology-driven world, personal data has become a hot topic. Every click, every purchase, every post – they all contribute to the vast data sets that form our digital identities. It’s like a digital fingerprint, unique to each one of us. But as we enjoy personalized experiences and targeted ads, we also find ourselves facing significant questions about ethical algorithms and data privacy, particularly in the context of diversity, equity, and inclusion (DEI).

Step 1: Decoding Algorithms and Data Privacy

Let’s start with the basics. Algorithms are sets of instructions that computers follow to solve problems. They are the unseen architects of our digital lives, shaping everything from search engine results to social media feeds. And personal data? That’s the fuel for these algorithms. Data privacy refers to the rights and practices governing how personal data is collected, used, and shared. According to a Pew Research Center survey, 79% of American adults are concerned about how companies use the data they collect about them. Understanding these basics is the first step toward exploring their implications for DEI.

Step 2: Unveiling Ethical Challenges in Algorithmic Decision-Making

Here’s where things get tricky. When algorithms play a significant role in decision-making, ethical issues arise. Algorithms can act like a mirror, reflecting societal biases back at us, and in doing so they can perpetuate inequalities and reinforce discriminatory outcomes against certain demographic groups. Hiring is one example: Amazon scrapped an experimental AI recruiting tool after discovering that it penalized résumés containing the word “women’s.” The documentary “Coded Bias” (2020) shows clearly how such biases can harm marginalized communities.

High-profile data privacy breaches also raise ethical concerns. In the Cambridge Analytica scandal, data from as many as 87 million Facebook users was harvested without their consent and used for political profiling. Incidents like this underscore the need for stringent data protection regulations and responsible data handling practices. Weak data protection is like leaving your front door wide open: anyone can walk in and take what they want for profiling and manipulation.

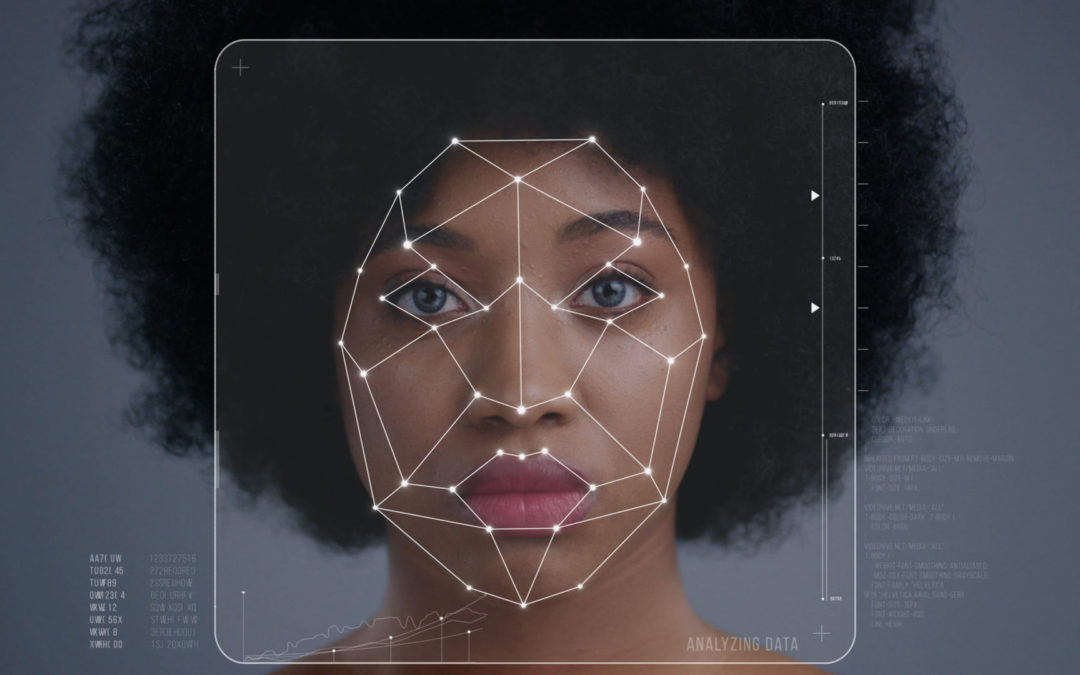

Facial recognition technology has faced scrutiny for its potential misuse and discriminatory impact. Studies have found higher error rates for people of certain racial and ethnic backgrounds, particularly people of color. These errors can lead to false identifications and unjust treatment in law enforcement and surveillance. It’s like a faulty ID scanner that keeps misidentifying the same people.

Data privacy concerns also disproportionately affect marginalized communities, which often lack adequate protections. A study by Carnegie Mellon University revealed that LGBTQ+ individuals often face online harassment due to personal data misuse. It’s like being singled out in a crowd simply because of who you are.

Transparency and explainability are key aspects of addressing ethical challenges. Algorithmic decision-making processes often operate as “black boxes,” making it difficult to comprehend how decisions are reached. This lack of transparency hampers accountability and impedes the detection and rectification of biases.
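To see what the opposite of a black box can look like, consider a model whose decision can be broken down feature by feature. The Python sketch below is a hypothetical illustration and does not describe any particular company’s system. It scores a record with a simple linear model and lists how much each feature pushed the score up or down. The function name, feature names, weights, and bias are all invented for the example.

```python
def explain_linear_score(weights, features, bias=0.0):
    """Score a record with a linear model and report each feature's contribution.

    weights and features map (hypothetical) feature names to numbers;
    a feature missing from the record is treated as 0.
    """
    contributions = {name: weights[name] * features.get(name, 0.0) for name in weights}
    score = bias + sum(contributions.values())
    # Sort so the features that moved the score most, in either direction, come first.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked


# Hypothetical hiring-style example with invented weights.
weights = {"years_experience": 0.5, "certifications": 1.0, "employment_gap_years": -0.75}
applicant = {"years_experience": 4, "certifications": 1, "employment_gap_years": 2}
score, ranked = explain_linear_score(weights, applicant, bias=-1.0)
print(score)   # 0.5
print(ranked)  # [('years_experience', 2.0), ('employment_gap_years', -1.5), ('certifications', 1.0)]
```

Because each contribution is simply weight × value, the explanation is exact for this kind of model. An auditor could see that `employment_gap_years` lowers the score and ask whether that feature disadvantages people who took time off for caregiving. More complex models usually need dedicated explanation techniques, and the explanations those techniques produce are approximations.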

Representation in data is crucial for addressing algorithmic bias and promoting diversity, equity, and inclusion in the tech industry. When a data set predominantly represents one group, systems trained on it tend to perform worse for everyone else. This perpetuates bias and disadvantages underrepresented communities. These challenges highlight the need for proactive measures in the tech industry to combat algorithmic bias and address data privacy concerns.
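Checking representation can start with simple counting. The Python sketch below reports each group’s share of a data set and flags any group that falls below a minimum share. The function name, group labels, sample data, and 10% threshold are hypothetical choices for illustration. What counts as adequate representation depends on the population a system is meant to serve.

```python
from collections import Counter


def representation_report(groups, min_share=0.10):
    """Return each group's share of the data set and the groups below min_share.

    `groups` holds one (hypothetical) demographic label per record.
    `min_share` is an illustrative threshold, not an established standard.
    """
    counts = Counter(groups)
    total = sum(counts.values())
    if total == 0:
        return {}, []
    shares = {g: n / total for g, n in counts.items()}
    underrepresented = sorted(g for g, s in shares.items() if s < min_share)
    return shares, underrepresented


# Hypothetical data set: 80 records from group "A", 15 from "B", 5 from "C".
labels = ["A"] * 80 + ["B"] * 15 + ["C"] * 5
shares, flagged = representation_report(labels)
print(shares)   # {'A': 0.8, 'B': 0.15, 'C': 0.05}
print(flagged)  # ['C']
```

A flagged group is a signal to collect more data or to evaluate the model’s performance on that group separately. It is not proof of bias on its own.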

Step 3: Recognizing the Role of Technology Companies and Policymakers

Technology companies and policymakers play a pivotal role in promoting ethical algorithms and data privacy. Like partners in a dance, they need to move together, and recognizing their responsibility is crucial to fostering a more inclusive and equitable digital landscape. Technology companies bear the responsibility of developing and deploying ethical algorithms. Industry leaders such as Google and Microsoft have published AI principles and responsible-AI commitments aimed at addressing algorithmic bias and promoting transparency. Still, there is work to be done, and these companies are far from the only ones facing such ethical challenges.

Let’s take a look at some other players in the tech industry. Uber, for example, faced backlash for its use of the “Greyball” tool, which was designed to evade law enforcement and regulatory authorities in certain cities. Uber has also faced allegations of bias in its ride-hailing service. A 2016 National Bureau of Economic Research study documented racial and gender discrimination on ride-hailing platforms, including Uber, raising concerns about unequal treatment.

TikTok, another prominent platform, has faced controversy over its privacy practices. It has also faced allegations of bias in its algorithms and content moderation. Questions have been raised about the platform’s data handling and about how its algorithms affect content visibility and reach.

These instances demonstrate that the ethical challenges surrounding algorithms and data privacy extend beyond a few notable companies. It’s a widespread issue that needs to be addressed across the board. It is crucial for all tech companies to prioritize ethical considerations, transparency, and fairness in their algorithmic systems.

To achieve this, collaboration between technology companies, policymakers, and relevant stakeholders is paramount. Stricter regulations, such as the European Union’s General Data Protection Regulation (GDPR), provide a framework for protecting user privacy and holding companies accountable. In the United States, proposed legislation such as the Algorithmic Accountability Act aims to address bias and discrimination in algorithmic decision-making.

Through collective efforts and a commitment to ethical practices, the tech industry can drive positive change by fostering algorithms that are equitable, transparent, and inclusive. It’s like a team effort where everyone has a part to play in shaping a more ethical future.

“In the pursuit of a more inclusive and equitable digital landscape, it is crucial to advocate for steps that prioritize ethics in algorithms and data privacy. This includes transparency, auditing, regulation, and the active inclusion of marginalized communities.”

Step 4: Advocating for Steps Towards a More Ethical Future

Having identified the challenges, the question now is: what can be done to foster a more inclusive and equitable digital landscape? Here are some steps that can be advocated for:

- Transparency: Just as one would expect transparency in any significant transaction, the same should be expected from tech companies. They should provide clear explanations of their algorithmic processes and the data they collect. This gives users the ability to make informed decisions regarding their data.

- Auditing: Regular audits of algorithms are like health check-ups for our digital experiences. They help identify potential biases and verify that systems treat different groups fairly. These audits should be an integral part of responsible algorithmic development.

- Regulation: Just as a game needs rules to function, strict privacy regulations are needed to set the rules for ethical algorithms and data privacy. The European Union’s General Data Protection Regulation (GDPR) is a prime example of regulation designed to safeguard individuals’ data and privacy rights. Such regulations should encompass principles of fairness, non-discrimination, and accountability in algorithmic decision-making.

- Inclusion: In an ideal world, everyone’s voice would be heard. Inclusive decision-making processes are vital for ethical algorithms. Actively involving marginalized communities in developing algorithms and in decisions about data privacy ensures that diverse voices, experiences, and perspectives are considered. This involvement needs to be intentional. Diverse development teams are better able to represent the entire community they serve. McKinsey & Company’s research found that companies in the top quartile for ethnic diversity on their executive teams were 36% more likely to outperform on profitability than those in the bottom quartile. By embracing diversity, equity, and inclusion, tech companies can work towards algorithms that are fair, inclusive, and less prone to perpetuating biases.

- Improving Training Data: The quality of the training data used to develop algorithms can significantly affect their fairness and accuracy. For example, Joy Buolamwini, a researcher at the MIT Media Lab, and Timnit Gebru found that commercial facial-analysis systems from IBM, Microsoft, and Face++ were significantly more accurate at classifying the gender of lighter-skinned and male faces than that of darker-skinned and female faces. Error rates reached up to 34.7% for darker-skinned women, compared with at most 0.8% for lighter-skinned men. This disparity has been widely attributed to data sets overwhelmingly composed of lighter-skinned and male faces. Large language models raise similar concerns, since they can reproduce biases present in the text they are trained on. Diversifying training data can help improve the accuracy of these systems for all demographic groups.
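Both the auditing and training-data points rely on one practical step. A model’s accuracy must be measured separately for each demographic group, not only in aggregate, which is the kind of disaggregated evaluation the Gender Shades study performed. The Python sketch below assumes hypothetical lists of true labels, predictions, and group labels. The function name is invented, and reporting the gap between the best- and worst-served groups is an illustrative choice, not a specific audit standard.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Compute accuracy per group and the gap between best- and worst-served groups."""
    if not (len(y_true) == len(y_pred) == len(groups)):
        raise ValueError("inputs must have the same length")
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    acc = {g: correct[g] / total[g] for g in total}
    gap = max(acc.values()) - min(acc.values()) if acc else 0.0
    return acc, gap


# Hypothetical audit data: the model is right 4 of 4 times for group "X"
# but only 2 of 4 times for group "Y".
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 0, 0, 1, 1, 0]
groups = ["X", "X", "X", "X", "Y", "Y", "Y", "Y"]
acc, gap = accuracy_by_group(y_true, y_pred, groups)
print(acc)  # {'X': 1.0, 'Y': 0.5}
print(gap)  # 0.5
```

Here the overall accuracy is 6 of 8, or 75%, a figure that hides how much worse the model serves group "Y." Per-group reporting is what makes such gaps visible and lets a follow-up audit check whether retraining on more representative data closed them.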

The journey towards an ethical digital future is comparable to constructing a complex puzzle. Each piece represents a collective effort, a commitment to upholding diversity, equity, and inclusion principles, and the responsible and innovative use of technology. When these pieces come together, they have the potential to create a more inclusive and equitable world where everyone’s rights and dignity are upheld. As we continue to assemble this puzzle, the image of a better digital future begins to take shape. It’s a process that invites reflection, questioning, and dialogue about the kind of digital world that should be aspired to. The conversation about the future of tech is ongoing, and every voice contributes to the broader picture.

As we continue to place each piece, the insights gained will shape the future of the tech industry, with the goal of truly representing and respecting all users. The puzzle is far from complete, and the picture it will ultimately form is still emerging. This is the ongoing challenge and opportunity of creating an ethical digital future.

ABOUT THE AUTHOR

Dolores Crazover is the founder and CEO of DEI & You Consulting.

She is a leading advocate and a certified diversity, equity, and inclusion (DEI) and inclusive leadership consultant and facilitator. She is passionate about empowering others, science and technology (#womaninSTEM), and entrepreneurship. Dolores is also a multilingual public speaker covering several aspects of DEI, STEM, and entrepreneurship. She has spoken at major events hosted by organizations such as the Women’s Leadership Network (Estée Lauder Companies) and HUB Institute (Sustainable Leaders Forum).

Dolores supports organizations and individuals in unlocking their full potential. She leverages authenticity, DEI, stronger collaboration, and collective intelligence as tools for economic growth and for a sustainable, healthy shift in corporate culture that allows everyone to thrive.

She is a full-stack software engineer who leverages a strong background in STEM, entrepreneurship, and community building (DEI) to design customized, impactful solutions that drive innovation, optimize processes, and enhance lives. She is a detail-oriented problem-solver, experienced in working with cross-functional teams, and a self-described “good-vibes seeker.”

Footnotes

- McKinsey Global Institute. (2018). Notes from the AI frontier: Applications and value of deep learning

- The European Data Protection Board. (2022). Guidelines 01/2022 on Data Protection by Design and by Default.

- Rainie, L. (2020). Americans and Privacy: Concerned, Confused and Feeling Lack of Control Over Their Personal Information

- Dastin, J. (2018). Amazon scraps secret AI recruiting tool that showed bias against women. Reuters

- Buolamwini, J., & Gebru, T. (2018). Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification

- Coded Bias. (2020). Directed by Shalini Kantayya. 7th Empire Media

- Cadwalladr, C. (2018). The Cambridge Analytica Files: ‘I made Steve Bannon’s psychological warfare tool’: meet the data war whistleblower. The Guardian

- Raji, I.D., & Buolamwini, J. (2019). Actionable Auditing: Investigating the Impact of Publicly Naming Biased Performance Results of Commercial AI Products

- Google. (2020). Our Approach to AI Principles

- Microsoft. (2022). Responsible AI

- Isaac, M., & Kang, C. (2017). How Uber Deceives the Authorities Worldwide. The New York Times

- Ge, Y., et al. (2016). Racial and Gender Discrimination in Transportation Network Companies. National Bureau of Economic Research

- Hern, A. (2020). TikTok secretly collected device data for months via its Android app. The Guardian

- European Commission. (2016). General Data Protection Regulation (GDPR).

- U.S. Congress. (2019). Algorithmic Accountability Act.

- McKinsey & Company. (2020). Diversity wins: How inclusion matters.